
5/16/2023 Github airflow

The PythonOperator() refers to a method named update_github_events_data() and that method is figuring out the latest file in the S3 bucket and starts downloading all the complete days from that date onwards. The above sample is heavily shortened, so make sure to check the GitHub repository for the complete source. With a custom Dockerfile like this, the provider is installed as part of the build This is what the requirements.txt looks like You need to create a Dockerfile with a requirements.txt that contains the dependency to the Firebolt Provider. If you are running locally and testing with Docker, this is done in a few seconds.

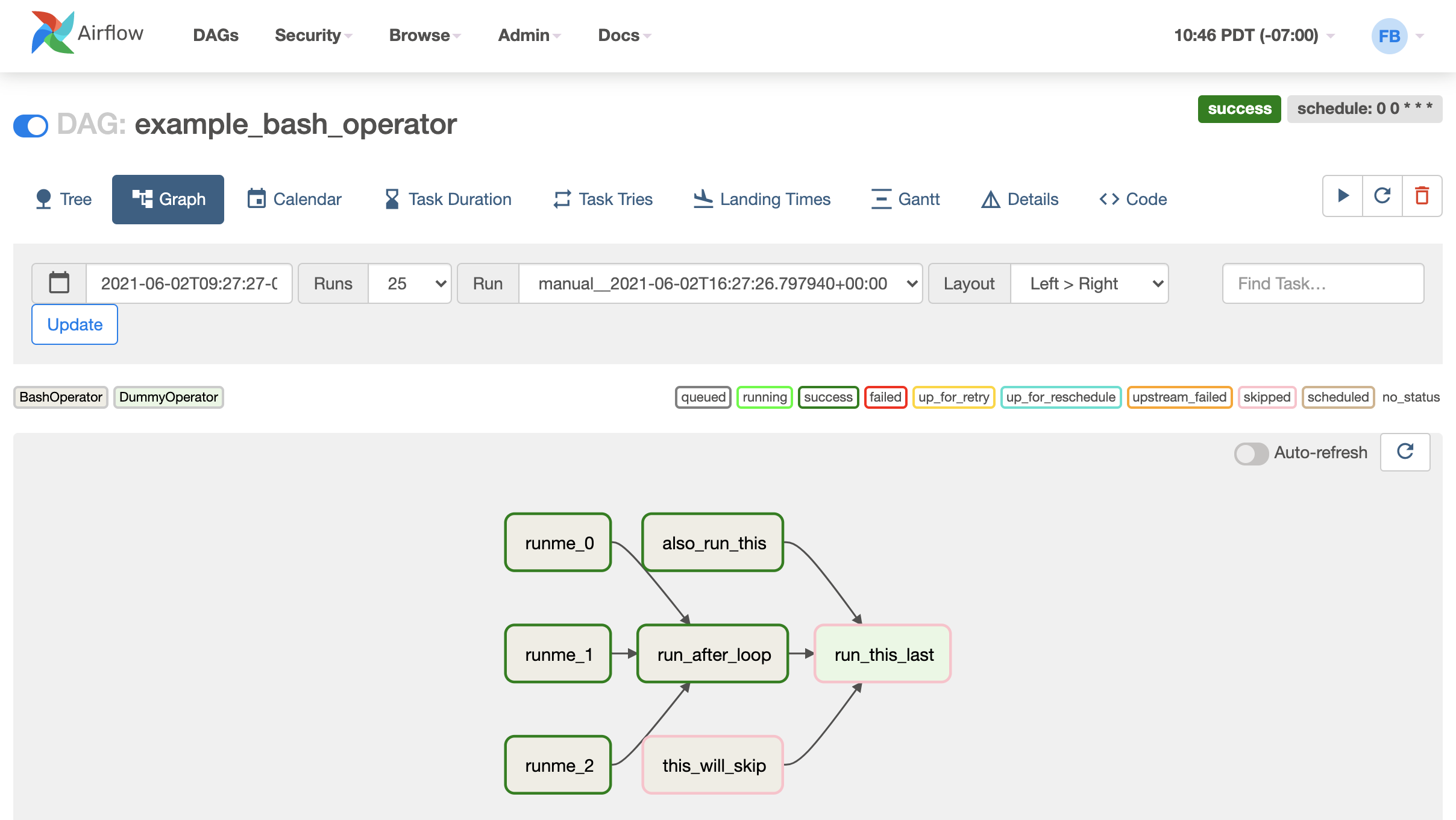

It is required to create a custom Docker Airflow image including the Firebolt provider.īefore we can test out our workflows, we need to extend the Apache Airflow base image with the Firebolt Airflow provider. Local Airflow development with Docker and the Firebolt provider For example the data in our S3 bucket is synced until three days ago, but the data in Firebolt is already three months old – this should not stop the synchronization into S3 in any way. Incremental update from the data in the S3 bucketīoth workflows are not tied or dependent on each other, as each workflow is supposed to figure out which data is required.If you prefer watching videos, check out the corresponding video on youtube.ĭoing an incremental update in our Firebolt database requires two different workflows. This allows for a start-execute-stop execution of a Firebolt engine to reduce costs if you only require a certain type of engine during workflow execution. The Firebolt provider contains operators that allow to start and stop engines as well as execute SQL queries against configured Firebolt connections. Firebolt has a nice Apache Airflow provider and we will use it in this blog post to have a full end-to-end integration for our GitHub dataset and ensure that incremental updates keep flowing in. Part 3: Incremental Updates with Apache AirflowĪpache Airflow is one of the heavily used orchestration tools in the data engineering industry.Part 2: Using Jupyter for data exploration.Part 1: Analyzing the GitHub Events Dataset using Firebolt - Querying with Streamlit.We will cover discovering the data using the Firebolt Python SDK, writing a small data app using the Firebolt JDBC driver as well as leveraging Apache Airflow workflows for keeping our data up-to-date. TLDR In this multi-series blog post we will take a look at the GithubArchive dataset consisting of public events happening on GitHub.
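The description of update_github_events_data() suggests roughly the following date logic. This is a minimal sketch, not the code from the author's repository: it assumes the bucket stores GH Archive's hourly files under their original names (for example 2023-05-12-7.json.gz), and parse_key, days_to_download, urls_for_day and the first_day fallback are hypothetical names. Listing the bucket (for example with boto3) and the downloads themselves are left out so the logic stays self-contained.

```python
from datetime import date, timedelta
from typing import Dict, Iterable, List, Set, Tuple

# GH Archive publishes one file per hour; the hour is not zero-padded
GHARCHIVE_URL = "https://data.gharchive.org/{day}-{hour}.json.gz"
SUFFIX = ".json.gz"


def parse_key(key: str) -> Tuple[date, int]:
    """Split a (hypothetical) S3 key such as 'gharchive/2023-05-12-7.json.gz' into (day, hour)."""
    name = key.rsplit("/", 1)[-1]
    if name.endswith(SUFFIX):
        name = name[: -len(SUFFIX)]
    day_part, hour_part = name.rsplit("-", 1)
    return date.fromisoformat(day_part), int(hour_part)


def days_to_download(keys: Iterable[str], today: date, first_day: date) -> List[date]:
    """Return the complete days that still have to be copied into the bucket."""
    hours_by_day: Dict[date, Set[int]] = {}
    for key in keys:
        day, hour = parse_key(key)
        hours_by_day.setdefault(day, set()).add(hour)

    if not hours_by_day:
        start = first_day  # empty bucket: begin at a configured start date
    else:
        latest = max(hours_by_day)
        # Re-fetch the latest day if some of its hours are missing, else continue with the next day
        complete = hours_by_day[latest] >= set(range(24))
        start = latest + timedelta(days=1) if complete else latest

    # Assumption: today is still incomplete, so the last complete day is yesterday
    last_complete = today - timedelta(days=1)
    days: List[date] = []
    current = start
    while current <= last_complete:
        days.append(current)
        current += timedelta(days=1)
    return days


def urls_for_day(day: date) -> List[str]:
    """All 24 hourly GH Archive URLs for one day."""
    return [GHARCHIVE_URL.format(day=day.isoformat(), hour=hour) for hour in range(24)]


if __name__ == "__main__":
    keys = [f"gharchive/2023-05-12-{h}.json.gz" for h in range(24)]
    print(days_to_download(keys, today=date(2023, 5, 16), first_day=date(2023, 1, 1)))
    # [datetime.date(2023, 5, 13), datetime.date(2023, 5, 14), datetime.date(2023, 5, 15)]
```

In the DAG, a PythonOperator with python_callable pointing at a function like this (plus the bucket listing and the downloads) would then run once per schedule interval.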
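The start-execute-stop pattern mentioned earlier hinges on one guarantee: the engine is stopped even when a query fails, otherwise a failed run could leave the engine running. The sketch below shows that guarantee in plain Python. EngineClient, RecordingClient and the engine name are hypothetical stand-ins, not the Firebolt provider's API. In an Airflow DAG the same effect would come from chaining a start task, a query task and a stop task, and giving the stop task trigger_rule="all_done" so it runs regardless of upstream failures.

```python
from contextlib import contextmanager
from typing import Iterator, List, Protocol


class EngineClient(Protocol):
    """Hypothetical engine interface, standing in for the provider's operators."""

    def start(self, engine: str) -> None: ...
    def stop(self, engine: str) -> None: ...
    def execute(self, engine: str, sql: str) -> None: ...


@contextmanager
def running_engine(client: EngineClient, engine: str) -> Iterator[None]:
    """Start the engine, hand control to the caller, and always stop it afterwards."""
    client.start(engine)
    try:
        yield
    finally:
        client.stop(engine)  # runs on success and on failure


def run_incremental_load(client: EngineClient, engine: str, statements: List[str]) -> None:
    """Start the engine, execute every statement in order, then stop the engine."""
    with running_engine(client, engine):
        for sql in statements:
            client.execute(engine, sql)


class RecordingClient:
    """In-memory client that only records calls; optionally fails on one statement."""

    def __init__(self, fail_on: str = "") -> None:
        self.calls: List[str] = []
        self.fail_on = fail_on

    def start(self, engine: str) -> None:
        self.calls.append(f"start {engine}")

    def stop(self, engine: str) -> None:
        self.calls.append(f"stop {engine}")

    def execute(self, engine: str, sql: str) -> None:
        self.calls.append(f"execute {sql}")
        if sql == self.fail_on:
            raise RuntimeError(f"query failed: {sql}")


if __name__ == "__main__":
    client = RecordingClient(fail_on="SELECT 1")
    try:
        run_incremental_load(client, "ingest_engine", ["SELECT 1", "SELECT 2"])
    except RuntimeError:
        pass
    print(client.calls)  # ['start ingest_engine', 'execute SELECT 1', 'stop ingest_engine']
```

Using a context manager keeps the cleanup next to the start call; in Airflow the equivalent cleanup lives in the separate stop task.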
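Keeping the two workflows independent works if the Firebolt workflow derives its work from only two facts: which days exist in S3, and which day was last loaded into Firebolt. A minimal sketch, assuming the latest loaded day comes from a query such as a MAX over the event date of a hypothetical events table, and that s3_days already holds the complete days found in the bucket; days_to_load is a hypothetical helper. The usage reproduces the example from the text: S3 synced until three days ago, Firebolt roughly three months behind.

```python
from datetime import date, timedelta
from typing import Iterable, List, Optional


def days_to_load(s3_days: Iterable[date], latest_loaded: Optional[date]) -> List[date]:
    """Days available in S3 that are newer than the latest day already in Firebolt.

    latest_loaded is None when the Firebolt table is still empty.
    """
    available = set(s3_days)
    if latest_loaded is None:
        return sorted(available)
    return sorted(day for day in available if day > latest_loaded)


if __name__ == "__main__":
    today = date(2023, 5, 16)
    # S3 synced until three days ago; Firebolt last loaded about three months ago
    s3_days = [today - timedelta(days=n) for n in range(3, 100)]
    pending = days_to_load(s3_days, latest_loaded=date(2023, 2, 16))
    print(pending[0], pending[-1], len(pending))  # 2023-02-17 2023-05-13 86
```

Neither workflow reads the other's state directly, so the S3 sync can run ahead no matter how far behind Firebolt is.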
